Hi All,

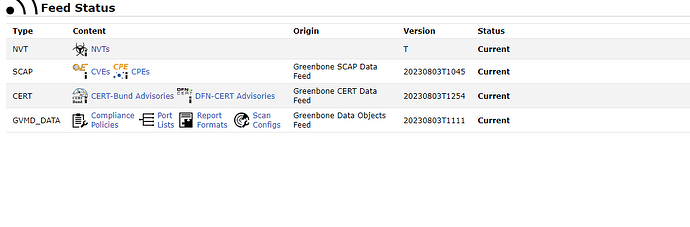

Sorry if this is a noob question. I used setup-and-start-greenbone-community-edition.sh to get the system up and running. However, it is not pulling/updating the NVT database. I just get a “T” for the version number.

I do see that the workflow doc describes running a command to update the feeds and then copying the data to the volume. But does that work if I used the startup script? I don’t see the yml file to run docker-compose with.

Any help or pointers would be great on this.

Thank you.

I don’t know of any script named setup-and-start-greenbone-community-edition.sh that is officially released from Greenbone. Where did it come from?

Thank you for responding. The script is from the Greenbone Community Containers 22.4 - Greenbone Community Documentation page. The download link for the script is https://greenbone.github.io/docs/latest/_static/setup-and-start-greenbone-community-edition.sh.

Anyway, I was able to find the yml file. Is there a way to delete the NVT database and have the system re-download it from scratch?

Ok, sorry I forgot about that script.

You said:

Anyway, I was able to find the yml file. Is there a way to delete the NVT database and have the system re-download it from scratch?

I don’t think you need to delete the NVT database and download it again. The Docker containers can take several hours to load all the NVT data into the PostgreSQL database the first time you pull and create the containers. In other words, you shouldn’t expect the Feed Status page to show the NVT data until it has been parsed and loaded.

If the NVT feed data still has not loaded after waiting for perhaps 24 hours, you can try pulling the feeds again as per the Docker containers workflow:

docker-compose -f $DOWNLOAD_DIR/docker-compose.yml -p greenbone-community-edition pull notus-data vulnerability-tests scap-data dfn-cert-data cert-bund-data report-formats data-objects

docker-compose -f $DOWNLOAD_DIR/docker-compose.yml -p greenbone-community-edition up -d notus-data vulnerability-tests scap-data dfn-cert-data cert-bund-data report-formats data-objects

But as the Workflow documentation says:

When feed content has been downloaded, the new data must be loaded by the corresponding daemons. This may take several minutes up to hours, especially for the initial loading of the data. Without loaded data, scans will contain incomplete and erroneous results.

So, I think your feeds are just not ready yet.
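If you end up repeating the two commands above, they can be wrapped in a small shell helper. This is only a sketch under a few assumptions: the default value of `DOWNLOAD_DIR`, the `DRY_RUN` switch, and the function names `compose` and `refresh_feeds` are made up for illustration and are not part of the Greenbone tooling. The service names and the project name are the ones from the commands above.

```shell
#!/bin/sh
# Sketch: re-pull and restart the feed data containers listed in the workflow commands.
# Assumption: DOWNLOAD_DIR points at the directory holding docker-compose.yml;
# the default below is a guess, so set it to wherever you found the yml file.
DOWNLOAD_DIR="${DOWNLOAD_DIR:-$HOME/greenbone-community-container}"
FEED_SERVICES="notus-data vulnerability-tests scap-data dfn-cert-data cert-bund-data report-formats data-objects"

# Run docker-compose against the community edition project.
# With DRY_RUN=1 (a hypothetical switch for this sketch) the command is only printed.
compose() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "docker-compose -f $DOWNLOAD_DIR/docker-compose.yml -p greenbone-community-edition $*"
    else
        docker-compose -f "$DOWNLOAD_DIR/docker-compose.yml" -p greenbone-community-edition "$@"
    fi
}

# Pull the latest feed images, then recreate the data containers.
refresh_feeds() {
    # FEED_SERVICES is left unquoted on purpose so it splits into separate service names.
    compose pull $FEED_SERVICES && compose up -d $FEED_SERVICES
}
```

Set `DRY_RUN=1` before calling `refresh_feeds` to preview the two commands without touching Docker; call it without `DRY_RUN` to actually pull and restart the data containers. Loading into the database still takes time afterwards, so the Feed Status page will not change right away.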

Thank you for the reply. I did try the two commands and I am still getting the weird NVT version ID. Is there anything else you can think of? I know this is a weird one.

I believe that is the normal reading of the Feed Status. At least, that’s what my docker containers also read.

EDIT: OK, let me adjust my answer. My Docker container says the same thing, but I’m not sure it’s supposed to be that way.